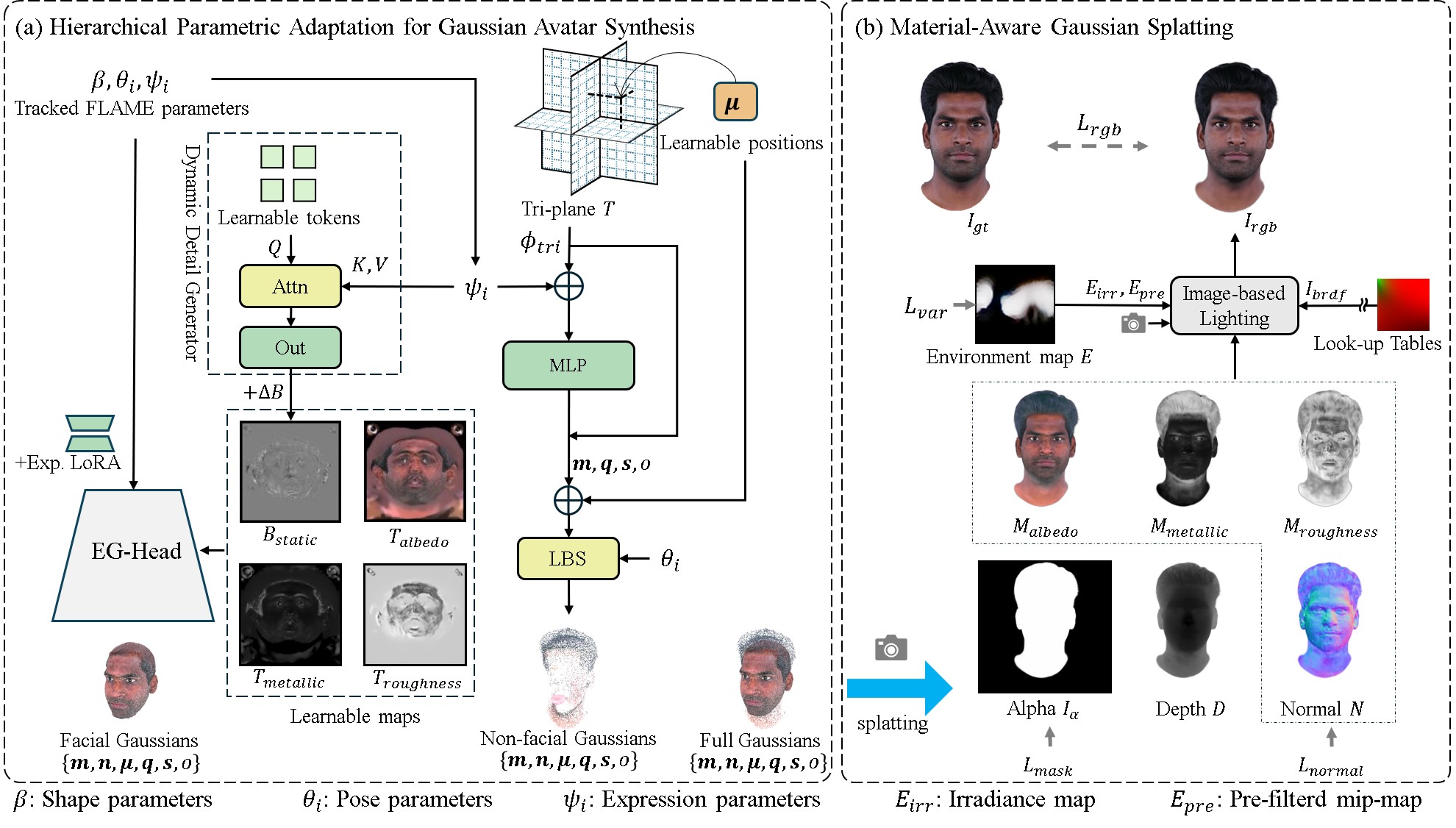

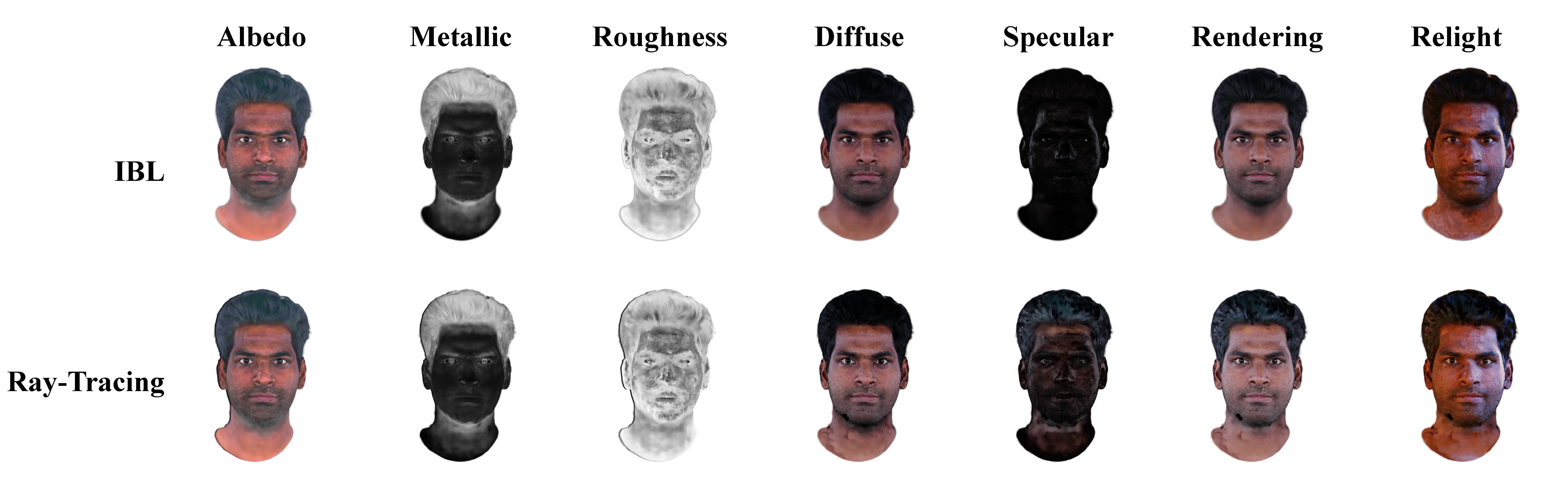

Recent advances in 3D head avatar generation combine 3D Gaussian Splatting (3DGS) with 3D Morphable Models (3DMM) to reconstruct animatable avatars from monocular video inputs. However, existing approaches exhibit two critical limitations: (1) prohibitive storage requirements from per-primitive animation parameters and spherical harmonics (SH) coefficients, and (2) compromised facial fidelity due to insufficient dynamic detail modeling. To address these challenges, we propose PBR-GAvatar, a novel framework featuring two key innovations. First, we develop a hierarchical parametric adaptation scheme that combines coarse 3DMM basis refinement via Low-Rank Adaptation (LoRA) with a lightweight Dynamic Detail Generator (DDG) that produces expression-conditioned details. Second, we introduce a material decomposition paradigm that replaces SH coefficients with compact Physically Based Rendering (PBR) textures. Geometry, dynamics, and material properties are optimized jointly through differentiable rendering. The proposed framework achieves a 20$\times$ size reduction (under 10MB) compared to state-of-the-art methods, while demonstrating superior reconstruction fidelity on the INSTA and GBS benchmarks. The PBR material system not only reduces storage demands but also enables photorealistic relighting under arbitrary illumination conditions. Our implementation will be made publicly available at https://liguohao96.github.io/PBR-GAvatar.
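
To make the idea of low-rank basis refinement concrete, the following is a minimal NumPy sketch of a generic LoRA-style update applied to a linear 3DMM expression basis. It is not the PBR-GAvatar implementation: the abstract does not specify the formulation, so the sizes `V`, `K`, and `r`, the names `lora_A` and `lora_B`, and the function `deform` are all assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes (not taken from the paper):
# V vertices, K expression coefficients, LoRA rank r.
V, K, r = 5000, 100, 8

mean_shape = rng.standard_normal(3 * V)   # stand-in for the template mesh
basis = rng.standard_normal((3 * V, K))   # frozen 3DMM expression basis

# Low-rank factors. Following the usual LoRA convention, one factor
# starts at zero so the adapted basis initially equals the original.
lora_A = rng.standard_normal((r, K)) * 0.01
lora_B = np.zeros((3 * V, r))


def deform(expr, basis, lora_A, lora_B):
    """Apply (basis + lora_B @ lora_A) to expr without forming the product."""
    return mean_shape + basis @ expr + lora_B @ (lora_A @ expr)


expr = rng.standard_normal(K)
verts = deform(expr, basis, lora_A, lora_B).reshape(V, 3)

# Trainable parameters: full basis versus the two low-rank factors.
full_params = basis.size
lora_params = lora_A.size + lora_B.size
print(f"full basis: {full_params}, low-rank update: {lora_params}")
```

With these assumed sizes, only the two small factors would be trained and stored per subject while the shared basis stays frozen, which is the general reason a low-rank update can help with storage.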

@misc{li2025pbrgavatar,
  title = {Learning Storage-Efficient 3D Gaussian Avatars from Monocular Videos via Parametric Adaptation and Material Decomposition},
  author = {Guohao Li and Hongyu Yang and Di Huang and Yunhong Wang},
  booktitle = {},
  year = {},
}